Introduction

Anthropic has launched the Advisor Tool, which inverts the usual logic of multi-model task execution. Instead of a larger model directing smaller ones, the Advisor strategy lets a smaller model consult a larger one during execution. Sonnet or Haiku can seek guidance from Opus at critical decision points, reaching intelligence close to Opus while most of the run is billed at the smaller model's rate.

In practice, Sonnet or Haiku consults Opus automatically when it faces a difficult decision, then continues its task with the guidance it receives. This pattern is referred to as the Advisor Strategy.

Reverse Sub-Agent Model

The common multi-agent model in the industry positions larger models as commanders, delegating tasks to smaller models. The Advisor strategy reverses this approach.

Sonnet (or Haiku) acts as the Executor and runs the task from start to finish: calling tools, reading results, and iterating. When it reaches a decision point where its own judgment is insufficient, it consults Opus as the Advisor. Opus receives the shared context and returns a plan, a correction, or a stop signal, after which the Executor continues.

The Advisor does not call tools or produce user-facing output; it only provides guidance. Advanced reasoning is applied only when the Executor needs it, and the rest of the run is billed at the Executor’s rate.

This design eliminates the need for task decomposition logic, worker pools, and orchestration frameworks. The Executor decides when to escalate, and the entire process completes in a single API call.
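The control flow described above can be illustrated with a toy simulation. This is only a sketch of the pattern, not Anthropic's implementation: in the real tool the handoff happens server-side and the Executor model itself decides when to consult. The `Step.hard` flag, `run_executor`, and `stub_advisor` are hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    hard: bool  # hypothetical flag: the executor's own judgment is insufficient here


@dataclass
class RunLog:
    events: List[str] = field(default_factory=list)
    advisor_calls: int = 0


def run_executor(steps: List[Step], advise: Callable[[str], str], max_uses: int) -> RunLog:
    """Run every step as the executor, consulting the advisor on hard steps
    until the max_uses budget is exhausted."""
    log = RunLog()
    for step in steps:
        if step.hard and log.advisor_calls < max_uses:
            # The advisor only returns guidance; it never performs the step.
            guidance = advise(step.description)
            log.advisor_calls += 1
            log.events.append(f"advisor: {guidance}")
        # The executor always does the actual work.
        log.events.append(f"executor: {step.description}")
    return log


def stub_advisor(question: str) -> str:
    """Stand-in for the larger model; returns a canned plan."""
    return f"plan for '{question}'"


steps = [
    Step("read failing test", hard=False),
    Step("choose refactor strategy", hard=True),
    Step("apply patch", hard=False),
    Step("decide whether to stop", hard=True),
]
log = run_executor(steps, stub_advisor, max_uses=1)
for event in log.events:
    print(event)
```

With `max_uses=1`, only the first hard step triggers a consultation; the second hard step is handled by the executor alone, which mirrors how a usage cap bounds Advisor spend.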

Performance Data

Let’s examine the combination of Sonnet + Opus Advisor.

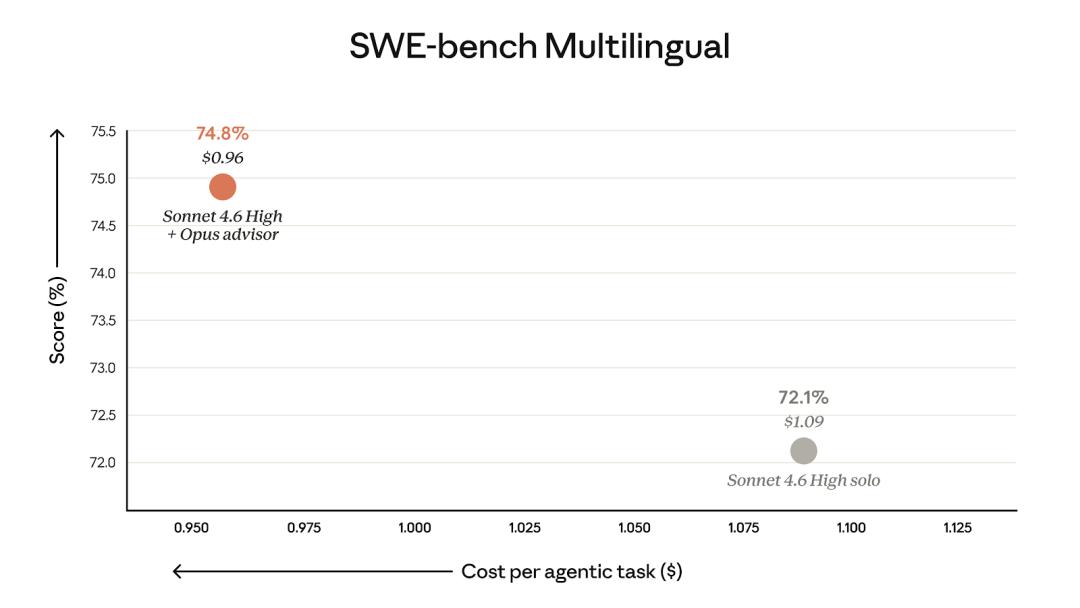

SWE-bench Multilingual

Sonnet + Advisor improved performance by 2.7 percentage points compared to Sonnet running solo, while reducing the cost per task by 11.9%. The cost reduction is attributed to the Advisor’s intervention, which lets the Executor avoid unnecessary detours and so reduces total token consumption.

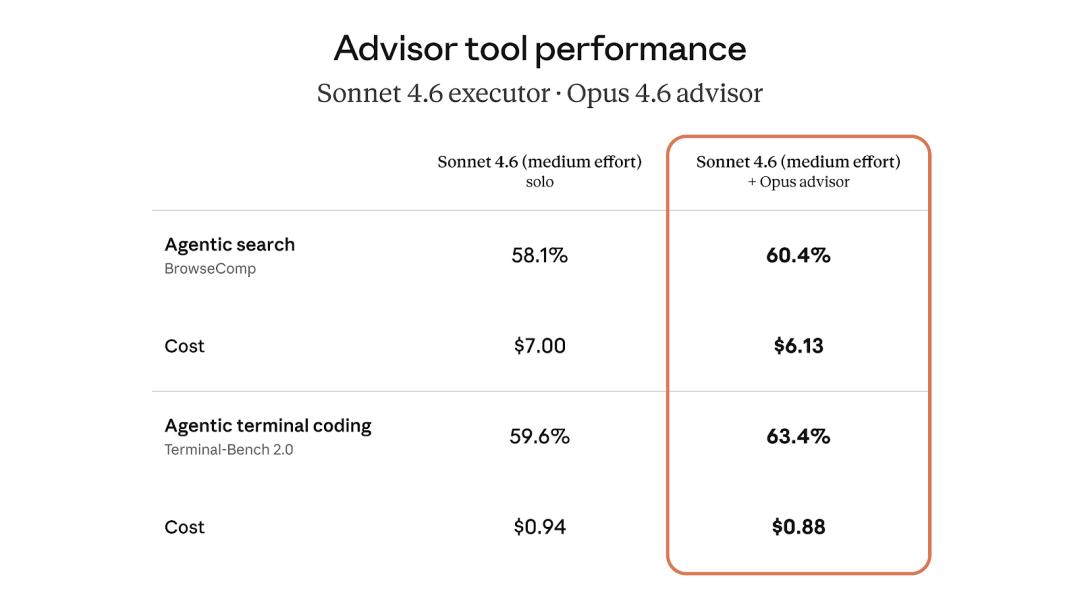

BrowseComp and Terminal-Bench 2.0

In BrowseComp and Terminal-Bench 2.0, Sonnet + Advisor also outperformed Sonnet running solo, with lower costs per task.

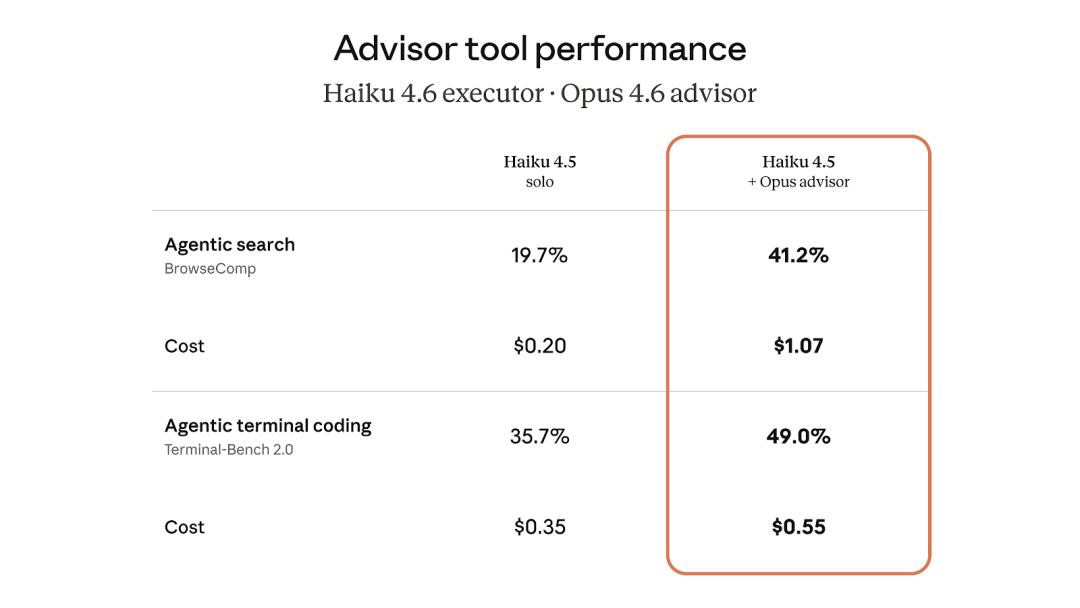

Next, let’s look at the combination of Haiku + Opus Advisor, which is even more interesting.

In BrowseComp, Haiku + Advisor scored 41.2%, more than double Haiku running solo (19.7%). Compared to Sonnet running solo, the score is 29% lower, but the cost is reduced by 85%.

For high-throughput scenarios that must balance intelligence against cost, this combination is attractive: it closes much of the gap to Sonnet while costing a fraction of what Sonnet does.

How to Use

From an API perspective, it’s very simple. Add a tool of type advisor_20260301 to the tools array in the Messages API request, specify Opus as the Advisor model, and set a max_uses limit to cap how many consultations are allowed per request.

The entire model handoff occurs within a single /v1/messages request, eliminating the need for additional network round trips and context management. The Executor decides when to call the Advisor, and Anthropic routes the selected context to the Advisor model, allowing the Executor to continue executing after receiving the plan.
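As a rough sketch of what such a request body might look like, the snippet below builds the JSON payload without sending it. Only the tool type advisor_20260301, the max_uses limit, and the tools array come from the description above. The `name` and nested `model` keys inside the tool entry, the helper `build_advisor_request`, and the placeholder model IDs are assumptions made for illustration; consult the official API reference for the actual schema and for any beta header the feature may require.

```python
import json
from typing import Any, Dict

ADVISOR_TOOL_TYPE = "advisor_20260301"  # tool type named in the article


def build_advisor_request(
    executor_model: str,
    advisor_model: str,
    prompt: str,
    max_uses: int = 3,
    max_tokens: int = 4096,
) -> Dict[str, Any]:
    """Build a /v1/messages request body that declares an advisor tool."""
    if max_uses < 1:
        raise ValueError("max_uses must be at least 1")
    return {
        "model": executor_model,  # the Executor (e.g. a Sonnet or Haiku model ID)
        "max_tokens": max_tokens,
        "tools": [
            {
                "type": ADVISOR_TOOL_TYPE,
                "name": "advisor",       # ASSUMPTION: key and value are illustrative
                "model": advisor_model,  # ASSUMPTION: key name for the Advisor model
                "max_uses": max_uses,    # cap on consultations per request
            }
        ],
        "messages": [{"role": "user", "content": prompt}],
    }


# Placeholder model IDs; substitute real identifiers from the model list.
request_body = build_advisor_request(
    executor_model="EXECUTOR_MODEL_ID",
    advisor_model="ADVISOR_MODEL_ID",
    prompt="Fix the failing test in utils.py",
)
print(json.dumps(request_body, indent=2))
```

Keeping the payload construction in one function makes it easy to vary max_uses per workload, for example a lower cap for simple tasks and a higher one for long agentic runs.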

Billing is split by model: the Advisor’s tokens are billed at the Advisor model’s rate (Opus at $5/$25 for input/output), while the Executor’s tokens are billed at the Executor model’s rate (Sonnet at $3/$15 or Haiku at $1/$5). Since the Advisor typically generates a short plan (usually 400-700 tokens), the overall cost is much lower than running Opus throughout.

You can control costs by limiting the number of Advisor calls with max_uses. The token consumption of the Advisor is reported separately in usage.
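The billing split can be made concrete with a small estimator. This is a back-of-the-envelope sketch: it assumes the quoted prices are US dollars per million input/output tokens, and the token counts in the example are hypothetical values chosen for illustration, not measurements. The names `Rate`, `cost_usd`, and `advised_run_cost` are invented here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    input_per_mtok: float   # USD per million input tokens (assumed unit)
    output_per_mtok: float  # USD per million output tokens (assumed unit)


# Prices as quoted in the article.
RATES = {
    "opus": Rate(5.0, 25.0),
    "sonnet": Rate(3.0, 15.0),
    "haiku": Rate(1.0, 5.0),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of a given token volume at one model's rate."""
    rate = RATES[model]
    return (input_tokens * rate.input_per_mtok
            + output_tokens * rate.output_per_mtok) / 1_000_000


def advised_run_cost(executor: str, exec_in: int, exec_out: int,
                     advisor_calls: int, adv_in_per_call: int,
                     adv_out_per_call: int, advisor: str = "opus") -> float:
    """Executor tokens at the executor's rate plus advisor tokens at the advisor's rate."""
    executor_cost = cost_usd(executor, exec_in, exec_out)
    advisor_cost = advisor_calls * cost_usd(advisor, adv_in_per_call, adv_out_per_call)
    return executor_cost + advisor_cost


# Hypothetical run: 200k input / 20k output executor tokens, two advisor
# consultations that each read 30k tokens of context and write a 600-token plan.
with_advisor = advised_run_cost("sonnet", 200_000, 20_000, 2, 30_000, 600)
opus_only = cost_usd("opus", 200_000, 20_000)
print(f"Sonnet + Advisor: ${with_advisor:.2f}, Opus throughout: ${opus_only:.2f}")
```

Note that this comparison holds the Executor's token counts fixed. The benchmark results above suggest the Advisor can also reduce the Executor's total tokens, which a static estimate like this does not capture.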

Early User Feedback

- “Better architectural decisions on complex tasks, with no extra overhead on simple tasks. The planning and execution trajectories are completely on different levels.”
  Eric Simmons, CEO of Bolt

- “We have seen clear improvements in agent rounds, tool call counts, and overall scores, better than our own planning tools.”
  Kay Zhu, Co-founder and CTO of Genspark

- “In structured document extraction tasks, Advisor allowed Haiku 4.5 to consult Opus 4.6 on demand, achieving state-of-the-art model quality at a cost 5 times lower.”
  Anuraj Pandey, Machine Learning Engineer at Eve Legal

Key Signals

- This is Anthropic’s first native support for inter-model collaboration at the API level. Previously, coordinating Sonnet and Opus meant writing orchestration logic, managing context passing, and handling the state of two API calls. Now a single tool declaration suffices.

- The pricing logic is clever. The Advisor outputs only 400-700 tokens per call, costing just a few cents at Opus’s rate. That small investment in guidance helps the Executor avoid detours and reduces total token consumption, which explains how adding the Advisor can actually lower total cost.

- The Haiku + Opus Advisor combination is noteworthy. It scores 41.2% on BrowseComp while costing 85% less than running Sonnet solo, which may make it the better fit for large-scale, cost-sensitive agent deployments.

- The timeline continues to accelerate. With Mythos, Managed Agents, and the Advisor Tool all released within a week, the density of Anthropic’s product line is increasing rapidly.
